Agentic AI Is an Organisational Design Problem, Not a Technical Programme

- Paul Sala

- 3 days ago


Transformation leads must lean in with a new perspective on functional alignment, ownership, delivery rhythm, risk management and value capture.

Executive summary

Agentic AI is at risk of being underframed.

That may sound strange, given the volume of noise around it. Every vendor deck now seems to include agents. Every conference keynote has a slide about digital labour.

Every leadership team is somewhere between curious, pressured and slightly worried that they are already behind.

But hype is not the same as framing.

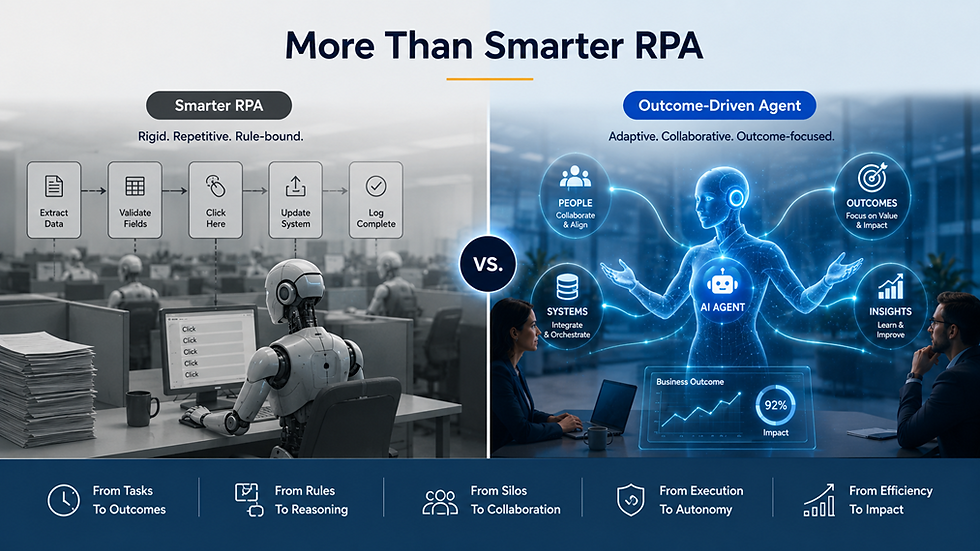

The risk is that organisations look at agentic AI and see something familiar: a smarter bot, a better assistant, a more conversational automation tool, or RPA with better language skills and more access to data.

That view is understandable. It is also too small.

If agentic AI is framed as smarter RPA, the business case will follow the old automation logic. It will focus on hours saved, tasks completed faster, fewer manual updates, reduced cost to serve and productivity gains inside individual functions.

Those benefits are real. They may even be valuable. But they are unlikely to justify the level of transformation required to use agentic AI properly.

And that is where organisations can get themselves into trouble.

They will run pilots. They will find use cases. They will prove some local efficiency. They will build small business cases around small pockets of productivity. Then they will wonder why the promised transformation never arrived.

The issue will not be that agentic AI was overhyped. The issue will be that it was underframed.

The bigger opportunity is not to use agents to automate existing tasks. The bigger opportunity is to use agents to redesign how work moves around business outcomes.


Agentic AI is not RPA with better language skills

RPA was built around the task.

It asked sensible questions. Which repetitive steps can be automated? Which screens can be copied? Which rules can be followed? Which handoffs can be accelerated? Which manual updates can be removed?

That logic created value in many organisations, but it also had limits. RPA often automated around the edges of broken processes. It made work move faster without always asking whether the work should exist in the first place. It helped functions become more efficient, but it did not always improve the outcomes that customers, employees, suppliers or leaders actually cared about.

Agentic AI can do some of those tasks better. It can interpret language, use more context, connect to systems, retrieve information, summarise, draft, recommend, route, and sometimes act.

But if that is all it does, then it is simply the next automation wave.

Useful? Yes. Transformational? Not necessarily.

The larger opportunity is different. Agentic AI should make leaders ask where work gets stuck between functions, where decisions slow down because context is fragmented, where customers or employees experience the organisation as disconnected, where controls, data, ownership, and workflow fail to line up, and where a digital worker could help move work across systems and teams in service of an outcome.

That is not RPA with a new interface. That is a potential redesign of how work is organised.

Organisations are built around functions. Outcomes are not.

Most organisations say they are outcome-driven. Their structures usually tell a different story.

Budgets are functional. Teams are functional. Systems are functionally owned. Governance forums are functional. Controls are functionally managed. Measures are often functional. Career paths are functional. Leadership accountability is functional.

Finance owns finance. HR owns HR. Sales owns sales. Technology owns technology. Operations owns operations. Procurement owns procurement. Legal owns legal. Risk owns risk.

That structure is not stupid. It creates expertise, clarity and control. Nobody sensible wants a free-for-all where every function wanders across every boundary in the name of agility.

But value does not move through the organisation chart.

Customer onboarding may involve sales, legal, finance, compliance, operations and service. Employee onboarding may involve HR, IT, security, facilities, payroll and line management. Supplier resilience may involve procurement, finance, legal, risk and supply chain. Service recovery may involve technology, cyber, operations, vendors, communications and business leadership.

The outcome is cross-functional. The organisation is functional.

That has always been one of the central tensions in transformation. Agentic AI makes it harder to ignore.

A task-oriented agent can make one functional step faster. An outcome-driven agent should be able to help work move across the organisation in the way value is actually created.

But the word “should” matters.

Agents will not magically become outcome-driven because the vendor demo says they are. If they are deployed inside functional silos, measured through functional metrics and governed through functional ownership, they will mostly reinforce the organisation that already exists.

Finance will have finance agents. HR will have HR agents. IT will have IT agents. Sales will have sales agents. Everyone will get faster. The customer may still be stuck.

That is the task-agent trap.

The task-agent trap

Most organisations will start with task-oriented agents. That is sensible.

Task agents are easier to explain, easier to fund, easier to govern and easier to measure. A finance team can build an agent to triage invoice exceptions. HR can build an agent to answer policy questions. IT can build an agent to summarise incidents. Sales can build an agent to update CRM records. Procurement can build an agent to review supplier information.

These are useful applications. They may save time, improve consistency and reduce manual effort.

But they also create a trap.

If every function builds agents around its own tasks, the organisation may simply create faster silos. Finance becomes faster at finance work. HR becomes faster at HR work. IT becomes faster at IT work. Sales becomes faster at sales work.

The enterprise outcome may not improve.

Customer onboarding may still be slow. Employee onboarding may still be fragmented. Supplier risk may still be poorly coordinated. Service recovery may still require manual escalation. Product launches may still stall between teams. Working capital may still be affected by decisions made across sales, finance, operations and procurement.

The agent did its task. The outcome remained stuck.

That is why agentic AI cannot be judged only by local productivity. A task agent may be successful inside its function and still fail to improve the business outcome that matters.

This is where transformation teams need to lean in.

Their role is not to dismiss task agents. Task agents are a sensible place to start. But transformation teams must help leadership avoid confusing local automation with enterprise transformation.

The question should not only be: “Which tasks can agents perform?” It should also be: “Which outcomes are still constrained by the way work moves across functions?”

That is the shift from automation thinking to transformation thinking.

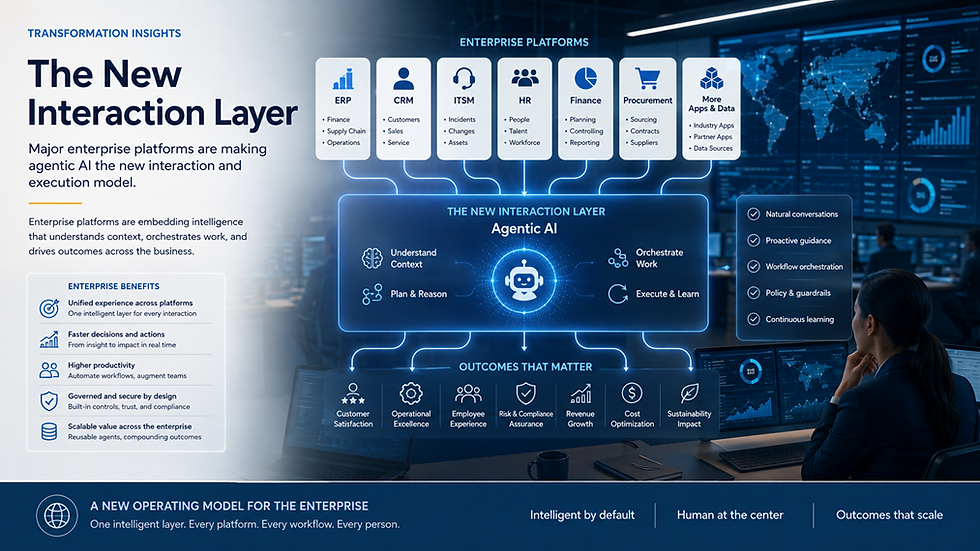

The major platforms are pushing this shift, whether organisations are ready or not

This is not a theoretical debate. The major business system vendors are already pushing agents into the core of enterprise software.

SAP, Oracle, Salesforce, ServiceNow, Microsoft, Workday, Atlassian and IBM are all positioning agents as part of the way work will be triggered, orchestrated, governed or executed across enterprise platforms. The details and maturity vary by vendor, but the direction of travel is consistent.

Vendors are not treating agents as decorative AI features. They are positioning agentic AI as the new interaction and execution model for enterprise systems.

The old enterprise software model was: human logs in, human searches, human interprets, human updates, human routes, system records.

The emerging model is: human or event defines an outcome, agent interprets context, agent uses systems, agent recommends or acts, system records, human supervises exceptions.

That is a big deal for ERP, CRM, ITSM, finance, HR, procurement, service management and process control.

It means enterprise platforms are moving from systems people operate to environments where people and agents execute work together.

Transformation plans can no longer focus only on system implementation, adoption, training, reporting and governance. They now need to address what work becomes agent-led, what remains human-led, what becomes human-agent collaboration, which decisions can be automated, which controls must be embedded and how value is captured across functions.

This is not just a new feature set. It is a new operating-model challenge arising from the enterprise software roadmap.

| Software / vendor | Agent name or platform | Agentic pattern |
| --- | --- | --- |
| SAP | Joule / Joule Agents / Joule Studio | Agent-supported enterprise process execution using SAP business context, data and workflows across business functions. |
| Oracle | Fusion Agentic Applications | Coordinated teams of specialised agents embedded into Fusion Cloud applications, positioned around enterprise execution across finance, supply chain, HR and related processes. |
| Salesforce | Agentforce | Digital labour across sales, service, marketing and customer operations, with agents acting inside CRM context and connected workflows. |
| ServiceNow | ServiceNow AI Agents / AI Control Tower | Autonomous or semi-autonomous workflow execution across IT, employee services, service operations and other workflows, with governance and observability as central themes. |
| Microsoft | Copilot Studio / enterprise agents | Agents embedded into productivity, collaboration and workflow environments, with growing emphasis on enterprise management and control. |
| Workday | Workday AI agents / agent management direction | Workforce-style visibility, ownership and measurement of AI agents alongside people and business processes. |
| Atlassian | Rovo / Jira agents / Teamwork Graph | Agents supporting collaboration, project delivery, knowledge retrieval, issue resolution and team execution. |
| IBM | watsonx Orchestrate | Orchestration and governance of agents, tools and workflows across systems. |

If the AI agent is an employee, who is the boss?

The phrase “AI employee” can sound gimmicky. It is useful only if we take it seriously.

A human employee does not just get a task. They get a role, a manager, objectives, training, access rights, decision limits, supervision, escalation routes and performance measures.

If an AI agent is given a job to do, it needs the same organisational logic.
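One way to make that organisational logic concrete is to write the agent's "job description" down as a record and check what has been left blank. The sketch below is illustrative only: `AgentRole`, its field names and the example agent are hypothetical and not taken from any vendor platform. It simply mirrors the list of things a human employee receives.

```python
from dataclasses import dataclass, field, fields


@dataclass
class AgentRole:
    """Hypothetical role record for an AI agent, mirroring what a human hire receives."""
    role: str = ""
    manager: str = ""  # the accountable human owner
    objectives: list[str] = field(default_factory=list)
    training_sources: list[str] = field(default_factory=list)
    access_rights: list[str] = field(default_factory=list)
    decision_limits: list[str] = field(default_factory=list)
    supervisor: str = ""
    escalation_routes: list[str] = field(default_factory=list)
    performance_measures: list[str] = field(default_factory=list)


def missing_elements(agent: AgentRole) -> list[str]:
    """Return the names of role elements that have been left empty."""
    return [f.name for f in fields(agent) if not getattr(agent, f.name)]


# An agent that was given a task and some access, but little else (assumed example).
onboarding_agent = AgentRole(
    role="customer onboarding agent",
    objectives=["reduce time from signed contract to active account"],
    access_rights=["crm:read", "billing:read"],
)

print(missing_elements(onboarding_agent))
# ['manager', 'training_sources', 'decision_limits', 'supervisor',
#  'escalation_routes', 'performance_measures']
```

The gaps in the output are the point: the agent has a task and system access, but no manager, limits or escalation route.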

But this creates a difficult question: does a cross-functional agent follow the same hierarchy that exists today, or does the organisation need to change?

For simple task agents, the answer may be straightforward. A finance agent can sit under finance. An HR agent can sit under HR. An IT service agent can sit under IT.

But the most valuable agents are likely to cross boundaries.

A customer onboarding agent may touch sales, legal, finance, compliance, operations and service. A supplier resilience agent may touch procurement, finance, risk, legal and supply chain. An employee onboarding agent may touch HR, IT, security, facilities, payroll and line management.

So who owns it?

If one function owns the agent, it may optimise locally. If technology owns it, it may be treated as a platform feature. If everyone owns it, nobody really does. If an outcome owner owns it, the organisation may need to strengthen outcome-based management.

That is the uncomfortable bit.

Agentic AI does not just ask whether the technology can act. It asks whether the organisation knows how to manage work that crosses its own boundaries.

Or, put more sharply: an AI agent can cross the organisation faster than the organisation can govern itself.

That is not a reason to stop. It is a reason to design properly.

Who trains the AI employee?

If the agent owner is the AI employee’s supervisor, does that person also train the agent? Not alone.

A human manager does not personally design the HR system, write every policy, configure every access right, provide every training module and audit every control.

They manage the employee’s contribution inside a wider organisational system.

The same applies to AI agents.

The agent owner should be accountable for the business outcome, the agent’s purpose, the acceptable level of risk and the performance expectation. But training, tuning and improving an agent requires several disciplines.

The business defines the purpose. Process owners define the work. Subject matter experts provide real-world context. Data owners make sure the agent is grounded in trusted information. Technology teams configure and integrate the agent. Risk and compliance define the boundaries. Security controls access. Internal audit tests evidence and control integrity. Transformation connects all of this back to the operating model and value case.

The agent owner manages the work. The organisation trains the worker. Transformation ensures both are aligned with the outcome.

That distinction matters. It stops organisations pretending that the business owner can carry everything, or that technology alone can train the agent safely.

Agentic AI requires organisational capability, not just technical enablement.

The democratisation problem is bigger than agent sprawl

For years, organisations have pushed to democratise automation. The idea made sense. The people closest to the work usually understand it best, so they should be able to improve it.

That principle still matters.

Finance teams understand finance exceptions. HR teams understand employee service patterns. Procurement teams understand supplier issues. IT teams understand service operations. Sales teams understand customer handoffs.

If agent design is kept too far from the business, it will become slow, abstract and disconnected from real work.

But agentic AI raises the risk of democratisation without control.

A locally built workflow may create duplication or inconsistency. A locally built agent can create something more serious: unmanaged digital labour operating across functional, system and control boundaries.

The old problem was shadow IT. The new problem is shadow labour. The bigger problem may be a shadow operating model.

Agent sprawl is part of the issue, but it is not the whole issue.

The deeper risks are separation of duties, audit trails, functional leadership boundaries, value capture and operating model drift.

Separation of duties

Most organisations rely on separation of duties to manage risk. One person raises a request. Another approves it. Another fulfils it. Another reconciles it. Another audits it. A cross-functional agent can blur those boundaries. If an agent can read, recommend, update, route, approve and execute, the organisation must ask whether it has accidentally combined duties that should remain separate. This is not just an AI governance concern. It is a core control issue.
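A rough sketch of how that question could be asked mechanically: list the duties an agent has been granted and compare them with the pairs one actor should not hold together. The duty names and the conflict pairs below are assumptions made for illustration; a real control matrix would come from risk and compliance.

```python
# Pairs of duties that, by assumption, a single actor should not hold together.
CONFLICTING_DUTIES = [
    ("raise", "approve"),
    ("approve", "fulfil"),
    ("fulfil", "reconcile"),
    ("reconcile", "audit"),
]


def duty_conflicts(granted: set[str]) -> list[tuple[str, str]]:
    """Return every conflicting pair that is fully contained in the granted duties."""
    return [pair for pair in CONFLICTING_DUTIES if set(pair) <= granted]


task_agent = {"raise"}
cross_functional_agent = {"raise", "approve", "fulfil"}

print(duty_conflicts(task_agent))              # []
print(duty_conflicts(cross_functional_agent))  # [('raise', 'approve'), ('approve', 'fulfil')]
```

The task agent passes trivially. The cross-functional agent is where previously separate duties quietly end up combined.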

Audit trails

Traditional audit trails follow users, approvals, transactions and system logs. Agents complicate that. The organisation may need to know what data the agent accessed, what recommendation it made, what action it took, which human approved it, which system was updated, what policy was applied, what version of the agent was active and whether the action crossed functional boundaries. If agents work across platforms, auditability becomes an operating model design issue, not just a logging feature.
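As an illustration of what such a trail might need to capture, the questions above can be turned into the fields of a single record. This is a minimal sketch, not a logging standard: `AgentAuditRecord`, its field names and the example values are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AgentAuditRecord:
    """Hypothetical audit entry for one agent action (frozen so it cannot be edited later)."""
    timestamp: datetime
    agent_id: str
    agent_version: str                 # which version of the agent was active
    data_accessed: tuple[str, ...]     # what data the agent read
    recommendation: str                # what it recommended
    action_taken: str                  # what it actually did
    approved_by: Optional[str]         # which human approved it; None if nobody did
    system_updated: str                # which system was changed
    policy_applied: str                # which policy governed the action
    functions_touched: tuple[str, ...]

    @property
    def crossed_functional_boundary(self) -> bool:
        """True when the action involved more than one function."""
        return len(set(self.functions_touched)) > 1


record = AgentAuditRecord(
    timestamp=datetime.now(timezone.utc),
    agent_id="supplier-resilience-agent",
    agent_version="1.4.0",
    data_accessed=("supplier_master", "open_purchase_orders"),
    recommendation="place supplier on payment hold pending review",
    action_taken="routed to procurement lead for approval",
    approved_by=None,
    system_updated="procurement workflow queue",
    policy_applied="supplier-risk-policy",
    functions_touched=("procurement", "finance", "risk"),
)

print(record.crossed_functional_boundary)  # True
```

Several of these fields will usually sit in different platforms, which is why the record has to be designed rather than assumed to exist in one system's logs.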

Functional leadership boundaries

Functional leaders are accountable for functional performance. But cross-functional agents may influence outcomes that no single function owns. Who owns a customer onboarding agent that touches sales, legal, finance, compliance, operations and service? Who owns an employee onboarding agent that touches HR, IT, security, facilities, payroll and line management? Who owns a supplier resilience agent that touches procurement, finance, risk, legal and operations? These questions cannot be answered purely through system ownership. They require leadership alignment around outcomes.

Value capture

If an agent reduces work in one function but creates effort in another, has it created value? If it improves speed but weakens control, is that acceptable? If it improves a local metric but damages the customer outcome, who notices? If it creates capacity across five teams, who captures the benefit? Agentic AI will make value capture more complex because work, cost, risk and benefit may be distributed across functions.
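A small worked example shows how the local view and the enterprise view can diverge. The figures are invented purely for illustration, and `net_effect` is a hypothetical helper rather than an established benefits method.

```python
def net_effect(hours_delta_by_function: dict[str, float]) -> float:
    """Sum effort changes across functions; a negative total means effort was removed overall."""
    return sum(hours_delta_by_function.values())


# Illustrative numbers only: monthly change in effort after a finance agent goes live.
monthly_hours_delta = {"finance": -120.0, "operations": 45.0, "legal": 30.0}

local_view = monthly_hours_delta["finance"]
enterprise_view = net_effect(monthly_hours_delta)
absorbed_by = [name for name, delta in monthly_hours_delta.items() if delta > 0]

print(local_view)       # -120.0
print(enterprise_view)  # -45.0
print(absorbed_by)      # ['operations', 'legal']
```

On these assumed numbers, finance would report 120 hours saved while the enterprise gains 45, and two other teams carry new work that no local business case recorded.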

Operating model drift

This may be the quietest risk. A local team creates an agent. The agent starts doing work. The work changes. Handoffs change. Approvals change. Data usage changes. People begin relying on the agent. Nobody updates the operating model. Over time, the real organisation and the documented organisation diverge. That is dangerous. Transformation teams need to help leaders see that agentic AI is not just automating the operating model. It may be rewriting it.

Democratisation without chaos

The answer is not to stop functions from creating agents.

That would slow innovation and disconnect agent design from the work itself. It would also be unrealistic. The tools are moving too quickly, the vendor roadmaps are too aggressive and the pressure for productivity is too high.

The answer is governed democratisation.

Functions should be encouraged to identify opportunities, design agent roles and shape use cases. But every agent should sit within a shared enterprise understanding of ownership, decision rights, control requirements, auditability, functional impact and value measurement.

That does not need to become a bureaucratic swamp. In fact, it must not. If governance is too slow, teams will work around it. We have all seen that film before, and the sequel is never better.

But if there is no governance model, agent sprawl will become the next wave of enterprise complexity, and cross-functional agents will start operating faster than leadership can manage.

Agentic AI should be democratised.

But digital workers should not be unmanaged. Controls should not be accidental. Outcomes should not be ownerless. Operating models should not be redesigned by stealth.

Why transformation teams are central

This is where transformation teams need to stop waiting for an invitation.

Agentic AI is not just a technology initiative that will eventually need change management. By the time someone says, “Can transformation help with adoption?”, many of the important decisions may already have been made.

Good transformation teams have spent years building exactly the capabilities agentic AI now requires.

They know how to align functions around a shared objective. They know how to expose unclear ownership. They know how to translate strategy into delivery rhythm. They know how to connect risk management to execution. They know how to track value beyond activity. They know how to build governance that helps work move rather than simply adding theatre. They know how to bring business, technology, data, risk, finance and operations into the same conversation.

Those skills will be put to the test.

Agentic AI will stress every weak point in the operating model: unclear accountability, fragmented data, local optimisation, inconsistent controls, slow decision-making, poor benefits tracking and functional silos.

Transformation teams are not peripheral to this. They are one of the best-positioned groups to address it.

Their role is not to own the AI platform. Their role is to ensure agentic AI is absorbed into the organisation deliberately, safely and in a way that creates measurable business value.

That means helping leadership answer the questions that actually matter: are we using agents to automate tasks, or to improve outcomes? Do our current functional structures support outcome-driven agents? Who owns an agent that crosses multiple functions? What authority does the agent have? Who trains, supervises and improves it? How do we protect separation of duties? How do we preserve auditability? How do we prevent local optimisation from creating enterprise risk? How do we measure value when benefits are distributed across teams? How do we stop the operating model being redesigned by stealth?

These are not technical questions. They are transformation questions.

The stakes are too high to repeat old mistakes

There is a familiar pattern in transformation programmes.

A new technology arrives. The demo is impressive. The business case is optimistic. The pilots look promising. The hard organisational questions are deferred. Functions move at different speeds. Ownership gets blurry. Controls arrive late. Value is difficult to prove. The programme becomes heavier, slower and more political than expected.

Then everyone acts surprised.

Agentic AI is too important for that.

If organisations frame it as smarter RPA, they may get useful task automation, but they should not expect transformation. The prize will be smaller, and the business case may never justify the organisational effort required.

If organisations frame it as outcome-driven digital work, the opportunity becomes much larger. Agents could help improve how work moves across the enterprise, reduce friction between functions, accelerate decisions, improve customer and employee outcomes, strengthen control visibility and create measurable business value.

But that ambition comes with bigger risks.

Outcome-driven agents challenge functional structures, ownership models, hierarchy, decision rights, audit trails, separation of duties and value capture. They force leaders to confront the gap between how the organisation is structured and how value is actually created.

That is why agentic AI is an organisational design problem, not a technical programme.

The technology may arrive through SAP, Oracle, Salesforce, ServiceNow, Microsoft, Workday or another enterprise platform. But the real work will happen in the operating model.

Transformation teams should lean in now.

Their superpowers (organisational alignment, ownership definition, delivery rhythm, risk management and value capture) are exactly what will determine whether agentic AI becomes another underwhelming automation wave or a genuine transformation of work.

The organisations that succeed will not simply deploy more agents. They will professionalise the management of digital workers. They will strengthen outcome ownership across functions. They will protect controls and auditability. They will prevent operating model drift. They will connect agentic AI to measurable business value.

The real question is not whether AI agents can do the work. The real question is whether the organisation is ready to be redesigned around the outcomes those agents are supposed to serve.
